MultiMediate 2023

Here, we introduce the different challenge tasks, evaluation methodology and rules for participation.

Baseline approaches are available at https://git.opendfki.de/philipp.mueller/multimediate23

Bodily Behaviour Recognition

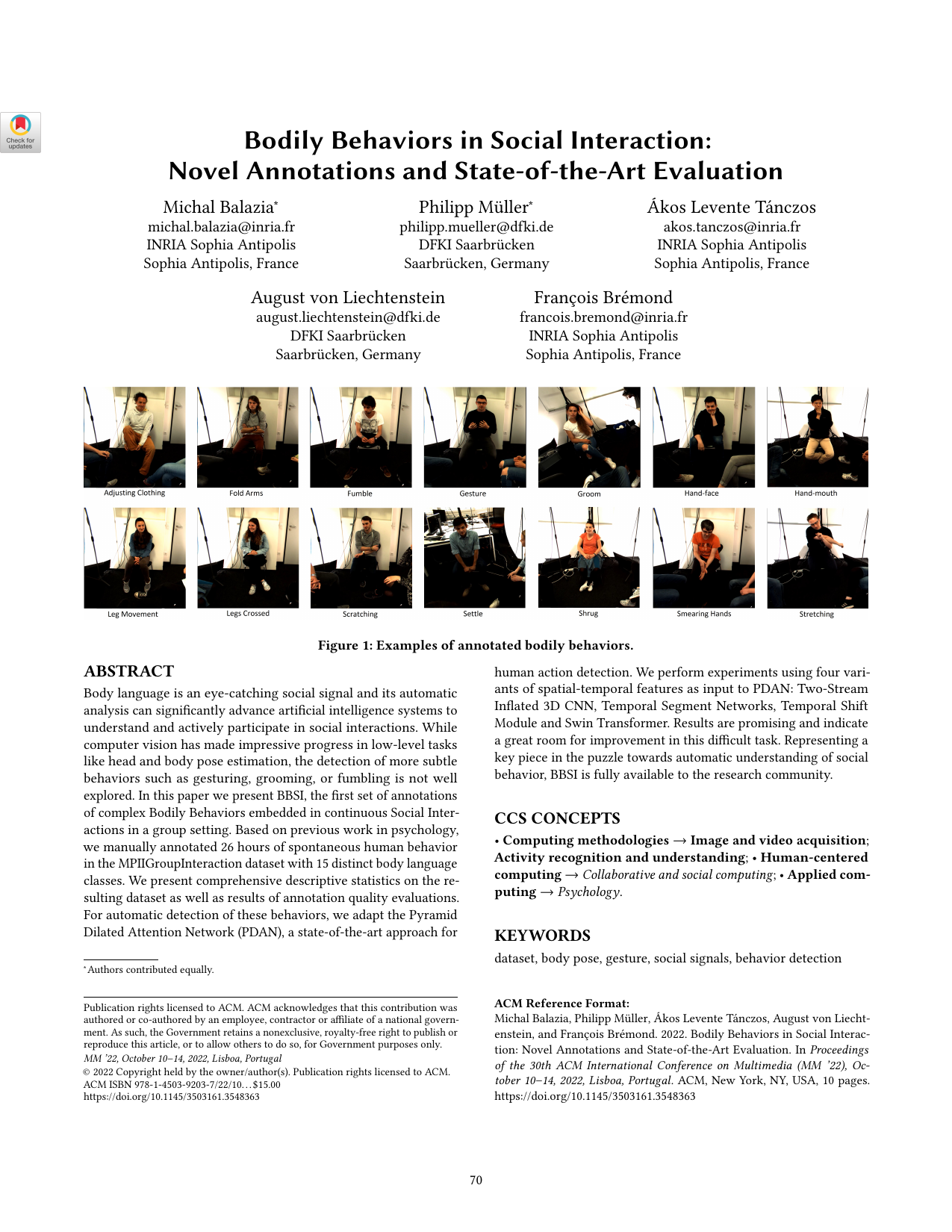

Bodily behaviours like fumbling, gesturing or crossed arms are key signals in social interactions and are related to many higher-level attributes, including liking, attractiveness, social verticality, stress and anxiety. While impressive progress has been made on human body and hand pose estimation, the recognition of these more complex bodily behaviours is still underexplored. With the bodily behaviour recognition task, we present the first challenge addressing this problem. We formulate bodily behaviour recognition as a 14-class multi-label classification task. This task is based on the recently released BBSI dataset (Balazia et al., 2022). Challenge participants will receive 64-frame video snippets as input and need to output a score indicating the likelihood that each behaviour class is present. To counter class imbalance, performance will be evaluated using macro-averaged average precision.
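Macro-averaged average precision computes average precision (AP) separately for each behaviour class and then takes the unweighted mean, so rare behaviours weigh as much as frequent ones. The Python sketch below uses the common non-interpolated definition AP = sum over thresholds of (R_n - R_{n-1}) * P_n, the same definition scikit-learn's `average_precision_score` uses. Whether the official evaluation uses exactly this variant, and how it treats classes with no positive snippets, are assumptions; the function names are illustrative only.

```python
def average_precision(y_true, y_score):
    """Non-interpolated AP for one class: sum of (R_n - R_{n-1}) * P_n over thresholds.

    y_true holds binary labels (1 = behaviour present), y_score the predicted scores.
    """
    n_pos = sum(y_true)
    if n_pos == 0:
        # AP is undefined without positives; the official handling is not
        # specified here (assumption: raise an error).
        raise ValueError("class has no positive samples")
    # Rank samples from most to least confident.
    ranked = sorted(zip(y_score, y_true), key=lambda pair: pair[0], reverse=True)
    ap, tp, fp, prev_recall, i = 0.0, 0, 0, 0.0, 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Samples with tied scores enter at the same threshold.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1]:
                tp += 1
            else:
                fp += 1
            i += 1
        recall = tp / n_pos
        precision = tp / (tp + fp)
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap


def macro_average_precision(y_true, y_score):
    """Unweighted mean of per-class AP.

    Both arguments are lists of rows: one row per video snippet and one
    column per behaviour class (14 columns in the bodily behaviour task).
    """
    n_classes = len(y_true[0])
    per_class = [
        average_precision([row[c] for row in y_true], [row[c] for row in y_score])
        for c in range(n_classes)
    ]
    return sum(per_class) / n_classes


# Toy example with 2 classes and 4 snippets (hypothetical data)
y_true = [[1, 0], [0, 1], [1, 1], [0, 0]]
y_score = [[0.9, 0.2], [0.8, 0.7], [0.3, 0.6], [0.1, 0.1]]
print(macro_average_precision(y_true, y_score))
```

In this toy example, class 0 gets AP = 5/6 because one negative snippet is ranked above a positive one, class 1 is ranked perfectly (AP = 1), and the macro average is 11/12.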

Engagement Estimation

Knowing how engaged participants are is important for a mediator whose goal is to keep engagement high. Engagement is closely linked to the previous MultiMediate tasks of eye contact detection and backchannel detection. For this challenge, we collected novel engagement annotations on the Novice-Expert Interaction (NoXi) database (Cafaro et al., 2017). This database consists of dyadic, screen-mediated interactions focussed on information exchange. Interactions took place in several languages, and participants were recorded with video cameras and microphones. The task is the continuous, frame-wise prediction of each participant's level of conversational engagement on a scale from 0 (lowest) to 1 (highest). Participants are encouraged to investigate multimodal behaviour as well as the reciprocal behaviour of both interlocutors. We will use the Concordance Correlation Coefficient (CCC) to evaluate predictions.
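The CCC (Lin's concordance correlation coefficient) measures agreement between ground truth y and predictions p as CCC = 2 * cov(y, p) / (var(y) + var(p) + (mean(y) - mean(p))^2). A minimal Python sketch of this standard formula follows, assuming both inputs are equal-length sequences of frame-wise engagement values and using population (1/N) statistics. How the organisers aggregate CCC over participants and sessions (for example per session or over concatenated predictions) is not specified here, and the function name is illustrative.

```python
def concordance_correlation_coefficient(y_true, y_pred):
    """Lin's CCC: 2*cov / (var_true + var_pred + (mean_true - mean_pred)**2).

    Uses population (1/N) statistics. Unlike Pearson correlation, CCC also
    penalises predictions that are shifted or scaled relative to the ground truth.
    """
    n = len(y_true)
    if n == 0 or n != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    var_t = sum((t - mean_t) ** 2 for t in y_true) / n
    var_p = sum((p - mean_p) ** 2 for p in y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred)) / n
    return 2 * cov / (var_t + var_p + (mean_t - mean_p) ** 2)


engagement = [0.0, 0.5, 1.0]  # hypothetical frame-wise ground truth
print(concordance_correlation_coefficient(engagement, engagement))  # 1.0
print(concordance_correlation_coefficient(engagement, [0.5, 1.0, 1.5]))  # shifted by +0.5
```

The shifted prediction is perfectly correlated with the ground truth (Pearson r = 1), yet its CCC drops to 4/7 because the constant offset of 0.5 is penalised.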

Continuing MultiMediate Tasks

In addition to the two tasks described above, we also invite submissions to the tasks from MultiMediate’21 and MultiMediate’22.

Backchannel Detection (MultiMediate’22 task)

Backchannels serve important meta-conversational purposes like signifying attention or indicating agreement. They can be expressed in a variety of ways, ranging from vocal behaviour (“yes”, “ah-ha”) to subtle nonverbal cues like head nods or hand movements. The backchannel detection sub-challenge focuses on classifying whether a participant of a group interaction expresses a backchannel at a given point in time. Challenge participants will be required to perform this classification based on a 10-second context window of audiovisual recordings of the whole group. Approaches will be evaluated using classification accuracy.

Agreement Estimation (MultiMediate’22 task)

A key function of backchannels is the expression of agreement or disagreement towards the current speaker. Artificial mediators need access to this information to understand the group structure and to intervene to prevent potential escalations. In this sub-challenge, participants will address the task of automatically estimating the amount of agreement expressed in a backchannel. As in the backchannel detection sub-challenge, a 10-second audiovisual context window containing views of all interactants will be provided. Approaches will be evaluated using mean squared error.

Eye Contact Detection (MultiMediate’21 task)

We define eye contact as a discrete indication of whether a participant is looking at another participant’s face, and if so, who this other participant is. Video and audio recordings from a 10-second context window will be provided as input to give temporal context for the classification decision. Eye contact has to be detected for the last frame of this context window. Approaches will be evaluated using classification accuracy.
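To make the timing concrete, the sketch below computes the frame range of a 10-second context window and the frame whose label is to be predicted. It is only an illustration: the helper `window_and_target` and the frame rate passed to it are hypothetical (the recordings' frame rate is not stated here), and the window is assumed to end at, and include, frame `end_frame`.

```python
def window_and_target(end_frame, fps, window_s=10.0, offset_s=0.0):
    """Hypothetical helper: frame range of a context window and its target frame.

    The window covers the window_s seconds of video ending at end_frame
    (inclusive). The target frame lies offset_s seconds after end_frame;
    offset_s=0.0 means the label refers to the last frame of the window.
    """
    n_frames = round(window_s * fps)
    first_frame = end_frame - n_frames + 1
    if first_frame < 0:
        raise ValueError("not enough history for a full context window")
    target_frame = end_frame + round(offset_s * fps)
    return first_frame, end_frame, target_frame


# Eye contact: label for the last frame of a 10 s window, assuming 30 fps
print(window_and_target(end_frame=299, fps=30))  # (0, 299, 299)
```

Passing `offset_s=1.0` would instead place the target one second after the window ends.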

Next Speaker Prediction (MultiMediate’21 task)

In the next speaker prediction sub-challenge, approaches need to predict which members of the group will be speaking at a future point in time. As in the eye contact detection sub-challenge, video and audio recordings from a 10-second context window will be provided as input. Based on this information, approaches need to predict the speaking status of each participant one second after the end of the context window. Approaches will be evaluated using unweighted average recall.
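Unweighted average recall (UAR) is the mean of the per-class recalls, so a majority class cannot dominate the score. A minimal Python sketch, assuming speaking status is encoded as a binary label (1 = speaking, 0 = not speaking) and that labels for all participants and samples are pooled into one list; the encoding and pooling are assumptions about the evaluation protocol, and the function name is illustrative.

```python
def unweighted_average_recall(y_true, y_pred, classes=(0, 1)):
    """Mean of per-class recall; every class counts equally regardless of frequency.

    Assumed encoding: 1 = speaking, 0 = not speaking, pooled over all
    participants and samples.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    recalls = []
    for c in classes:
        support = sum(1 for t in y_true if t == c)
        if support == 0:
            continue  # recall is undefined for a class absent from the ground truth
        hits = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        recalls.append(hits / support)
    if not recalls:
        raise ValueError("no class in `classes` occurs in y_true")
    return sum(recalls) / len(recalls)


y_true = [1, 0, 0, 0]
print(unweighted_average_recall(y_true, [0, 0, 0, 0]))  # 0.5
print(unweighted_average_recall(y_true, [1, 0, 0, 1]))
```

Always predicting "not speaking" reaches 75% accuracy on this toy example but only 0.5 UAR, illustrating why per-class recall is better suited than accuracy to imbalanced labels such as speaking status.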

Evaluation of Participants’ Approaches

Training and validation data for each sub-challenge can be downloaded at multimediate-challenge.org/Dataset/. Participants' approaches are then evaluated remotely by the organisers on the unpublished test portion of the dataset. We will provide baseline implementations along with pre-computed features to minimise the overhead for participants. For the tasks newly included in this year's challenge, the test set (without ground truth) will be released two weeks before the challenge deadline. Participants will then submit their predictions on the test set for evaluation (details will follow). For the MultiMediate’21 and ’22 tasks, the test sets are already published, and evaluations can be conducted by sending predictions to Michael Dietz ().

We will evaluate approaches with the following metrics: macro-averaged average precision for bodily behaviour recognition, the Concordance Correlation Coefficient for engagement estimation, accuracy for backchannel detection and eye contact detection, mean squared error for agreement estimation from backchannels, and unweighted average recall for next speaker prediction.
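Of the metrics listed above, accuracy (backchannel detection, eye contact detection) and mean squared error (agreement estimation) are the simplest. A minimal Python sketch of both, for checking predictions locally before submission; the function names and toy data are illustrative, and the label encoding (e.g. eye contact as the index of the participant being looked at) is an assumption.

```python
def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label equals the ground-truth label.

    Works for binary labels (backchannel / no backchannel) as well as
    multi-class labels (e.g. the index of the participant being looked at).
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Average squared difference between ground-truth and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


print(accuracy([1, 0, 1, 1], [1, 1, 1, 0]))  # 0.5
print(mean_squared_error([0.0, 0.5, 1.0], [0.0, 1.0, 0.5]))
```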

Evaluation is performed by submitting predictions to Eval.ai. Please do the following:

- Create an account here: https://hcai.eu/challenges/auth/signup

- Login with your account here: https://hcai.eu/challenges/auth/login

- Create a new participant team here: https://hcai.eu/challenges/web/teams

- Join the challenge with your participant team here: https://hcai.eu/challenges/web/challenges/challenge-page/25/participate

- Submit your results here: https://hcai.eu/challenges/web/challenges/challenge-page/25/submission

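The published test data for the MultiMediate’21 and ’22 tasks (video clips, OpenFace features and OpenPose features) can be downloaded here:

- https://www.perceptualui.org/closed-area/MPIIGroupInteraction-Complete-Dataset/clips_test.zip

- https://www.perceptualui.org/closed-area/MPIIGroupInteraction-Complete-Dataset/openface_test.zip

- https://www.perceptualui.org/closed-area/MPIIGroupInteraction-Complete-Dataset/openpose_test.zip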

Rules for participation

- The competition is team-based. Each person may be a member of only one team.

- For the bodily behaviour recognition and engagement estimation tasks, each team has five evaluation runs on the test set per task.

- For the tasks that were already included in MultiMediate’21 and MultiMediate’22, three evaluations on the test set are allowed per month. As an exception, five evaluations on the test set are allowed in July 2023.

- Additional datasets can be used, but they need to be publicly available.

- The organisers will not participate in the challenge.

- When awarding certificates for 1st, 2nd and 3rd place in each sub-challenge, we will only consider approaches described in accepted papers submitted to the ACM MM Grand Challenge track.

- The evaluation servers will be open until the paper submission deadline (14 July 2023).

- The test set (without labels) will be provided to participants 2 weeks before the challenge deadline. Manually annotating the test set is not allowed.